A towel swan blinks at you from a hotel bed. Then it hops off and waddles toward the door like it’s late for checkout.

That’s the kind of thing you can ask an AI video model to create in 2026. The question is: which one actually pulls it off?

The last time I ran a proper AI video comparison was October 2024, when I pitted Runway, Kling, Luma, Pika and MiniMax against each other. Back then, the results were impressive for their time but also hilariously flawed. Melting faces, physics-defying objects, and the occasional extra finger were par for the course.

Eighteen months later, the landscape has shifted dramatically. Some of those original contenders have faded. New players have emerged. And the ones that stuck around? They’ve levelled up in ways I genuinely didn’t expect.

So I renewed my RunwayML subscription, fired up four other models, and designed three hotel-themed tests specifically to push each one to its limits. The result? Some genuine surprises, a few laughs, and one towel swan that will haunt my dreams.

The Contenders

Five models. Three tests each. Fifteen videos total.

Runway Gen-4.5 from Runway (New York). Currently the top-rated model on the Artificial Analysis leaderboard with an Elo score of 1,247. Known for stunning visual fidelity and cinematic quality. Notably the only model in this test that doesn’t generate native audio.

Kling 3.0 Pro from Kuaishou (China). Released February 2026 with native 4K output, multilingual audio, and what reviewers are calling the best motion physics in the business. Generates 5, 10, or 15 seconds per clip.

Seedance 2.0 from ByteDance (China). The most talked-about model of 2026, and not just for its capabilities. When it first appeared in China in February, it went viral almost overnight with hyper-realistic clips featuring well-known actors and characters. Hollywood responded fast: Disney sent a cease-and-desist letter, Paramount accused ByteDance of “blatant infringement,” and two US Senators wrote to ByteDance’s CEO demanding they shut it down. ByteDance paused the global rollout, added safeguards (blocking real-face generation, C2PA watermarks, third-party red-teaming), and finally relaunched globally in April. Currently ranked #1 on Arena for text-to-video with an Elo of 1,450. Supports multi-shot storytelling and phoneme-level lip-sync in 8+ languages. Generates up to 15 seconds.

Gemini Veo 3.1 from Google DeepMind. Now available free (10 generations per month per Google account). Strong on prompt adherence and those smooth interior-to-exterior transitions that most models fumble.

Wan 2.6 from Alibaba (China). Open-source, affordable ($0.04/second), and packed with features, including reference-to-video character consistency and native audio sync. Released March 2026.

Worth noting: three of these five models are Chinese-made. A year ago, the conversation was dominated by American and European tools. That’s changed fast.

A quick word on cost. I ran all my tests through RunwayML’s unlimited plan ($95/month), which lets you generate with Runway, Kling, Seedance, and Wan in Relaxed mode at no extra cost per clip. The exception is Veo 3.1, which burns through credits fast at 320 credits per 8-second clip. Factor that in if you’re planning to iterate (and you will iterate, a lot).

The Tests

I didn’t want to just generate pretty landscapes and call it a day. I wanted tests that would expose real differences between models, tests that hotel marketers could actually learn from, and (let’s be honest) tests that would be fun to watch.

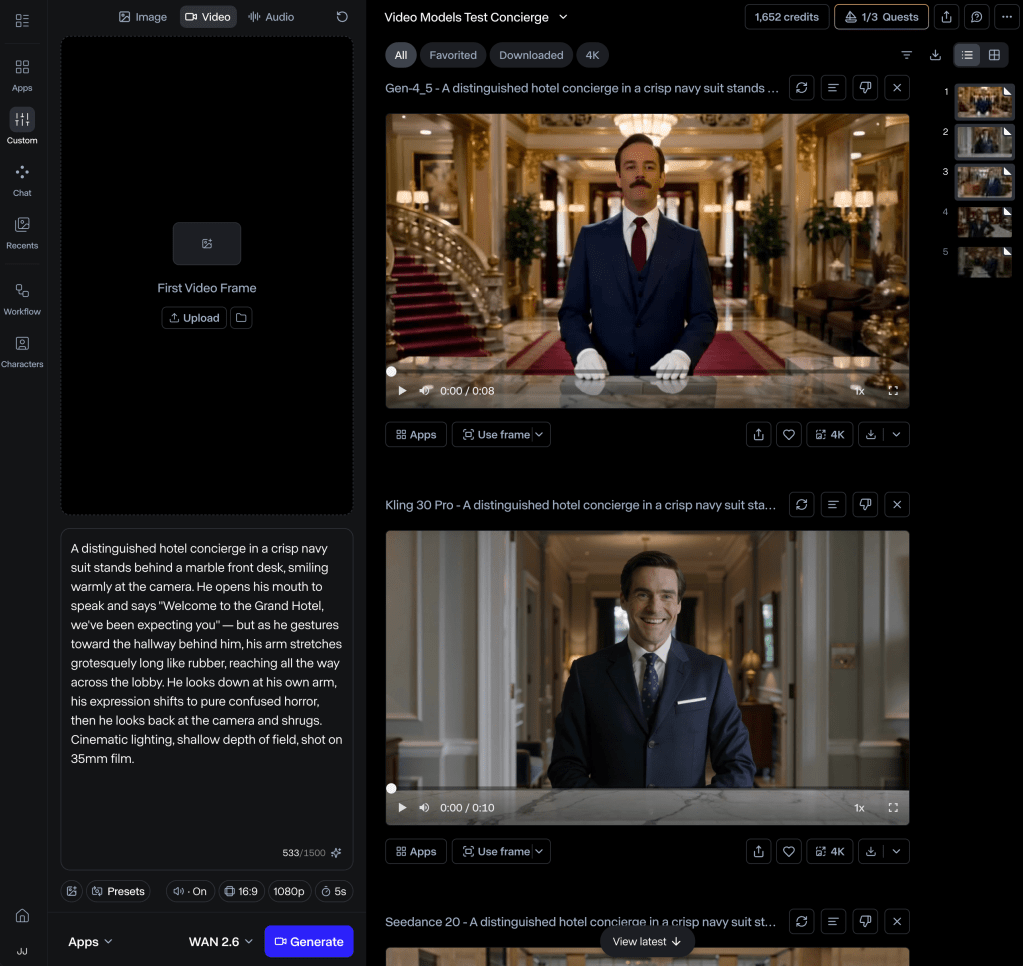

Test 1: The Concierge (Text-to-Video)

A hotel concierge looks into the camera, welcomes you, and delivers a line of dialogue. Then his arm stretches grotesquely across the lobby like rubber. He notices, looks horrified, then shrugs. This tests speech, facial expression, human anatomy, and the ability to handle deliberate surrealism.

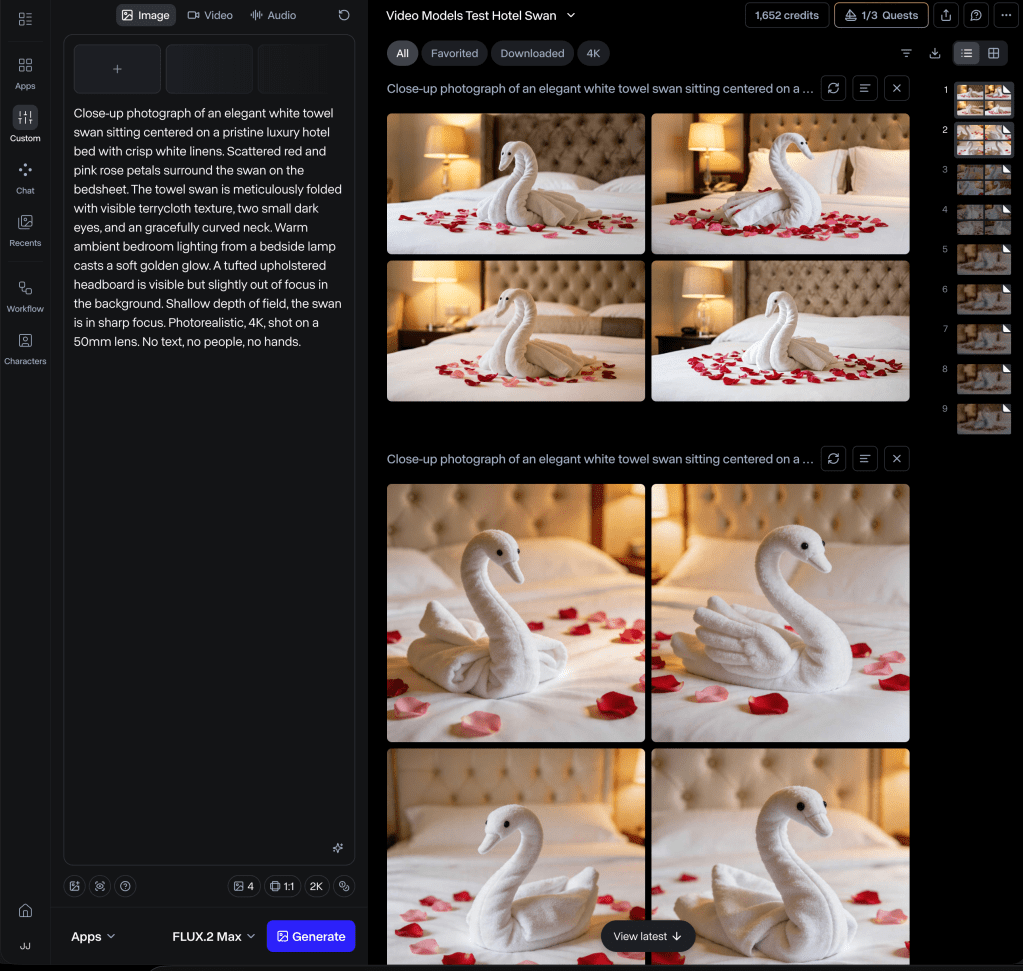

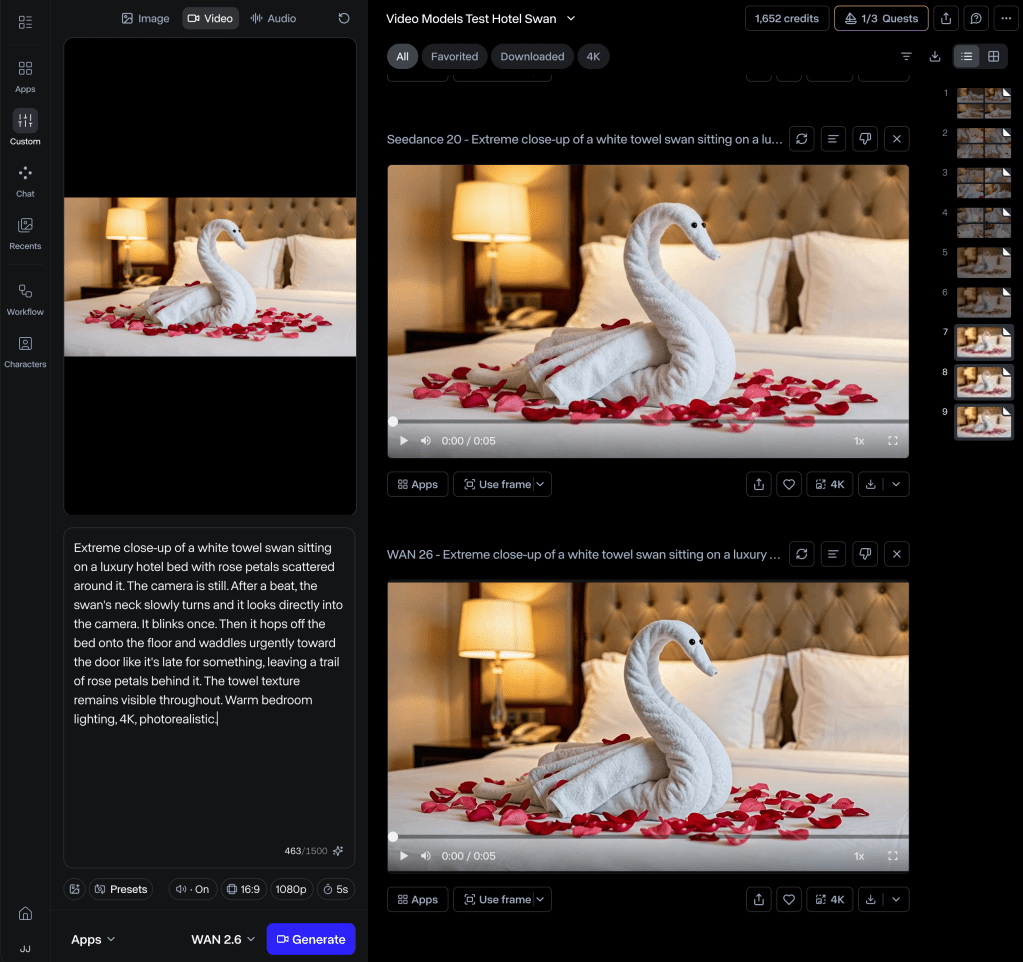

Test 2: The Towel Swan (Image-to-Video)

Starting from a single AI-generated image of a towel swan on a hotel bed, each model had to bring it to life: make it turn its head, blink, hop off the bed, and waddle toward the door. This tests animation from a still frame, material consistency, and physics.

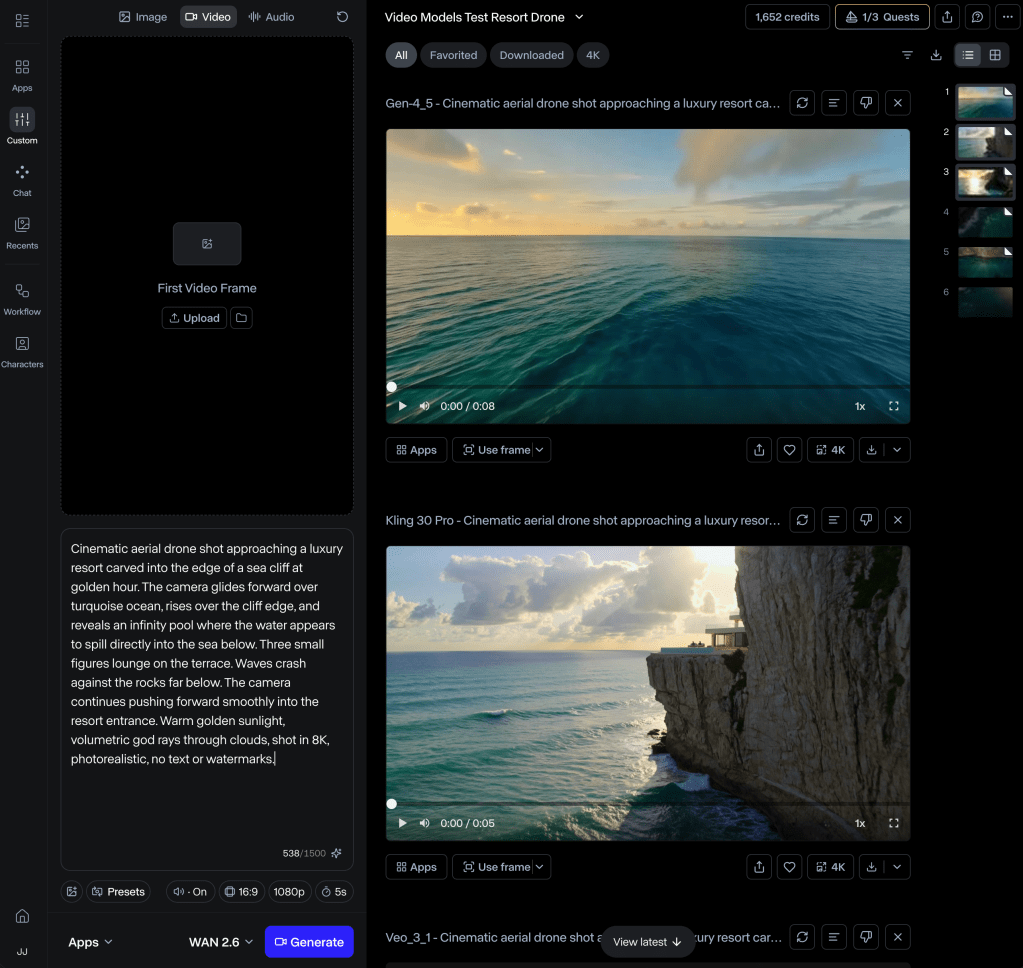

Test 3: The Impossible Resort (Text-to-Video)

A cinematic drone shot approaching a cliffside resort at golden hour, gliding over the ocean, rising over the cliff edge to reveal an infinity pool, then pushing forward into the resort entrance. This tests camera movement, landscape generation, water physics, and scale.

I used the exact same prompt for all five models in each test. No tweaking, no optimising for individual platforms. Apples to apples.

For the AI-powered evaluation, I fed all fifteen videos into Google Gemini with a structured scoring rubric across seven criteria. Fair warning: Gemini evaluating its own Veo 3.1 output isn’t exactly a neutral judge (I’ll flag where I think the scores were generous). My own human observations are layered in throughout.

You can watch all the clips in the embedded video below, but here’s what stood out.

Test 1: The Concierge

The headline finding: none of them nailed the rubber arm.

This was the hardest test by design. I was asking models to intentionally break human anatomy in a specific, controlled way. Kling came closest, turning the arm into something genuinely unsettling (borderline horror movie territory). Seedance gave it a strange stick-like extension. Runway produced a weird arm-suit hybrid. Wan and Veo essentially ignored the instruction entirely.

What surprised me: only Veo chose a female concierge. Every other model defaulted to a man. Make of that what you will.

The other four handled the dialogue reasonably well. Runway, as noted, is currently the only model here that doesn’t generate audio at all. Wan’s audio was a bit tinny and loud.

My pick for this scene: Kling or Seedance, with a few more generation attempts to refine the arm effect. Kling had the best comedic timing. Seedance had the most complete sequence.

Test 2: The Towel Swan

This was the most fun to watch. And the most revealing.

Before feeding a first frame to the video models, I tested four different image generators to create the towel swan itself: Nano Banana 2, Seedream 5.0, Runway Gen-4, and FLUX2 Max. All four produced decent results, but with interesting differences. Seedream created the prettiest swans, but they looked too polished, more like real birds than something a housekeeping team would fold from bath towels. Gen-4 gave the swan a realistic-looking beak, which is impressive until you remember it’s supposed to be made of terrycloth. FLUX2 Max was solid, but the overall aesthetics didn’t quite pop. Nano Banana 2 won for me because it nailed the thing that mattered most: it looked like an actual towel swan you’d find in an actual hotel room, complete with visible terrycloth texture and small bead eyes. Those eyes turned out to be critical, because without them the video models had nothing to animate.

Runway delivered beautifully. It even added little black feet to the swan (not in the prompt, but it made the waddle more believable). No audio, but visually? It felt like watching a Pixar short.

Kling was probably my favourite, tied with Veo. Kling’s lighting and textures were gorgeous. Veo was the only model that remembered to leave a trail of rose petals behind the swan, which showed impressive prompt memory for environmental details.

Seedance struggled with continuity. I only ran a 5-second output, which may not have been long enough for the full sequence, so I’ll give it the benefit of the doubt.

And then there’s Wan. Wan decided the towel swan needed actual bird legs. Real, textured, anatomically plausible bird legs sprouting from a folded towel. It was technically impressive and deeply cursed. If “towel body horror” is a genre, Wan just invented it.

My personal pick for this scene: Runway for pure charm, Veo for prompt adherence, Wan for unintentional comedy gold.

Test 3: The Impossible Resort

This is the one hotel marketers should pay closest attention to.

If you’ve ever priced a professional drone shoot for a resort property, you know it starts around $3,000 and climbs fast. So the question isn’t academic: could any of these models produce something a hotel brand could actually use?

Runway was visually stunning. Gorgeous colour grading, buttery smooth camera movement. But the resort and cliff sort of magically materialised during the sequence rather than being revealed, and the people on the terrace looked a bit… incomplete. Like mannequins someone forgot to finish rendering.

Kling’s water physics were arguably the best of any model. The waves crashing against rocks looked genuinely real. But it chose a side-on fly-by instead of the forward approach I asked for, and I only ran 5 seconds, so it couldn’t complete the full scene.

Veo scored well on prompt adherence (it was the only one that attempted the exterior-to-interior transition into the lobby). But it used cuts instead of one smooth continuous shot, and the colours felt oversaturated to my eye. I suspect Gemini’s evaluation was a touch generous here, for reasons that should be obvious.

Seedance showed promise but felt like 2023-era output compared to the top three, with jittery camera movement and flat textures.

Wan opened with an awkward half-submerged camera angle, and the architecture began warping as the camera pushed forward. Beautiful sea foam, though.

My personal pick for this scene: a longer Kling or Seedance generation (both support up to 15 seconds), or Veo as a backup. With a 10- or 15-second Kling output, I think this could genuinely rival a mid-range drone shoot.

The Scorecard

If I had to rank them overall across all three tests:

Kling 3.0 Pro takes the top spot for me. Strongest physics, best comedic timing, most consistent quality across all three tests.

Runway Gen-4.5 is a close second. Visually the most cinematic and polished, and the towel swan output was pure magic. The lack of audio is a real gap, though, especially when every competitor now offers it.

Veo 3.1 comes third. Best prompt adherence overall, and the free tier makes it the most accessible entry point for anyone wanting to experiment. However, if you’re paying for generation, the costs add up quickly. It plays things safe but delivers solid, usable results.

Wan 2.6 is fourth. The open-source model with the most raw potential, let down by occasional architectural instability and some, shall we say, creative anatomical decisions. At $0.04 per second, though, the price-to-quality ratio is hard to argue with.

Seedance 2.0 lands fifth in my tests (this time), but this one deserves a longer conversation. It only launched globally days ago, I ran shorter outputs for some tests, and its #1 Arena ranking suggests it’s capable of considerably more than what I captured here. There’s also the elephant in the room: the model that went viral in February, the one that had Hollywood reaching for its lawyers, was almost certainly more capable than the version we’re using today. ByteDance added significant guardrails before relaunching, and I’d be surprised if some of the raw creative power wasn’t dialled back in the process. That’s not a criticism. It’s the reality of shipping a product when the legal and ethical landscape is still being drawn. I’ll be spending more time with Seedance over the coming weeks and will do a proper deep-dive evaluation. Watch this space.

What This Means If You Work in Hotels

Eighteen months ago, these tools were fascinating toys. Today, they’re approaching the point where a scrappy marketing team could produce B-roll, social content, and concept videos without a production budget. We’re not at “replace your agency” yet. But we’re closer than most people in the industry realise.

The practical takeaway? Pick one model. Learn it properly. Start experimenting on low-stakes projects (social posts, internal presentations, mood boards for creative briefs). The gap between “someone who’s been playing with these tools for six months” and “someone who just heard about them” is going to be a career-defining advantage over the next couple of years.

One thing worth saying clearly, and the Seedance saga is a good reminder of this: with great power comes the need to use it thoughtfully. These tools make it trivially easy to create photorealistic video of places that don’t exist, services that aren’t offered, and experiences that were never delivered. That’s a creative superpower and an ethical minefield in the same package. Be transparent about what’s AI-generated. Follow your company’s policies. Check your local advertising regulations. And remember that in hospitality, trust is the product. A guest who shows up expecting the resort from your AI video and finds something different won’t just be disappointed; they’ll tell everyone. Use these tools to inspire, to concept, to experiment. Not to deceive.

A towel swan waddles toward the door. It’s silly. It’s impressive. It’s also a preview of where marketing content is heading.

You can stand in the hallway and watch it walk past, or you can be the one who learned how to make it move.

All fifteen video clips are available in the embedded YouTube comparison video above. Prompts, scoring methodology, and the full evaluation rubric are available on request.

Have you tested any of these models? I’d love to hear which ones you’ve had the most success with, and what you used them for.

Discover more from Hotelemarketer by Jitendra Jain (JJ)


0 comments on “The 2026 AI Video Shootout: A Towel Swan, a Rubber Arm, and Five Models Walk Into a Hotel”