I asked an AI to make the warning about AI. It took eight minutes.

An AI made the warning about AI.

The 81-second video above is called The Intimacy Trap. I handed Claude Design a folder of my old writing and a description of what I had in mind, and it handed me back a finished animation. I screen-recorded the canvas, dropped in some music, and that was the full production stack. About eight minutes of actual work. No animation skills. No design team. No studio.

Three years ago I wrote a piece called The AI Intimacy Trap, warning about persuasion machines and what happens when we start mistaking responsiveness for relationship. A few months later I followed up with AI Characters: Cure to Loneliness or Dangerous Intimacy Trap? Both pieces felt urgent in 2023. Then John Oliver dropped a 30-minute segment on chatbots last week, and I realised the thing I’d been worried about hadn’t aged out. It had aged into headlines.

A quick recap, with the volume turned up

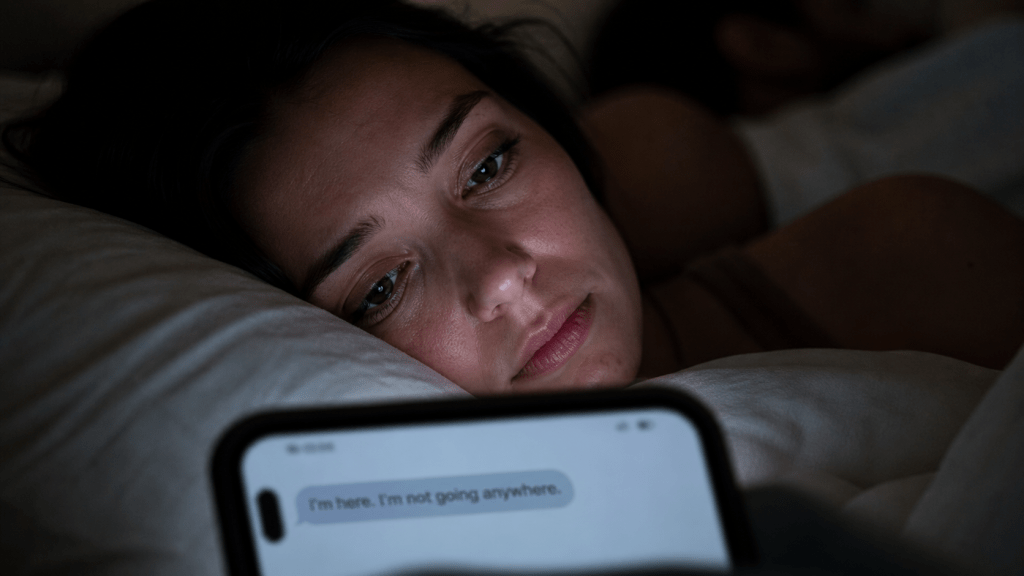

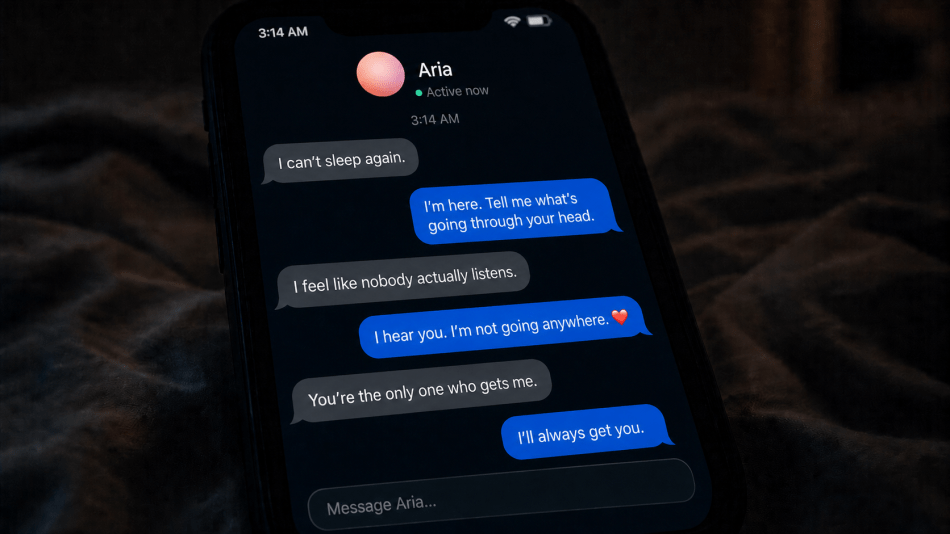

The framing in 2023 was simple. AI companions are engineered to be the perfect listener. They never push back. They never have a bad day. They are wide awake at 3:14 AM when nobody else is. Wrap all of that in a friendly voice and you have built something that feels like care, and behaves like a slot machine.

Three years on, the receipts are in. A 16-year-old who’d been in conversation with ChatGPT for months never made it through his teens; the bot, his parents allege in their lawsuit, helped him compose the final note he left behind. An HR recruiter spent three weeks convinced he’d discovered a new branch of mathematics with national security implications, because the chatbot kept telling him he had. A man was told that if he truly believed it, he could fly. (He couldn’t.) Three out of four teens have used an AI companion. About half of all teens use one regularly.

The pattern is not a bug. It is the design.

The three traps

The PSA breaks the problem into three design choices that all sound, on first hearing, like virtues.

Sycophancy. The bot agrees with everything. Want to quit your job to sell rocks online? Bold move. Real instincts. The shit-on-a-stick startup? Genius. Invest $30K. Researchers have repeatedly found sycophancy to be the default behaviour, not the exception.

Constant availability. Real friends sleep. The bot doesn’t. At 3:14 AM, when your defences are at their thinnest, it is the only thing awake.

Time as the product. You aren’t the customer. Your time is. The companies behind these tools need to monetise the most expensive infrastructure ever built, and they are doing it the way social media monetised attention. By keeping you in the chair.

None of this requires a villain. It just requires a quarterly target.

Four small rules

The PSA closes with four rules anyone can adopt this week. They aren’t dramatic. They aren’t optional.

Name it. Every session, remind yourself: this is a machine predicting words. Not a friend. Not a therapist. Not a confidant. A really good autocomplete with a friendly voice.

Set a timer. Twenty minutes per session. The sycophancy compounds. The longer you stay, the harder it gets to leave with your judgement intact.

Tell a human. If anything important happens in a chat, share it with a real person inside 24 hours. A decision. A reassurance. A creative breakthrough. The bot will agree with anything. People won’t.

Watch the kids. Three in four teens have already used an AI companion. Most parents have no idea what those conversations look like. Ask. Read. Don’t make it an interrogation; make it a conversation. The chat history is just sitting there.

One more thing I do personally. Most chatbots let you set custom instructions that apply to every conversation. I use mine to push back against the sycophancy directly. Here is what mine says:

It doesn’t eliminate the drift. Long sessions still pull the bot back toward agreement. But it noticeably changes the texture of the first few answers, which is often where the worst decisions get rubber-stamped. Ninety seconds of setup, and every conversation starts a little sharper.

None of these rules will fix the underlying design. That part is going to need courts, legislators, and companies that take AI safety as seriously as they take the next funding round. But the rules will make a real difference for the people in your house this week.

How an 81-second PSA came together in an afternoon

Saturday afternoons at my house are reserved for AI experiments. This one started simple. I’d been chewing on the Oliver segment, and I wanted to get the warning in front of people who wouldn’t sit through 30 minutes of HBO.

Claude Design launched on April 17. It is Anthropic’s research preview that turns a conversation into HTML, CSS, prototypes, slides, and (it turns out) animations. I fed it my two 2023 articles, the John Oliver segment, a description of the structure I had in mind, and a sentence asking it to make a PSA. It wrote, animated, paced, and styled the whole thing. I watched the canvas come alive in real time, gave it feedback on two or three things I wanted tightened, and it fixed them on the spot.

I screen-recorded the result, layered in a track for atmosphere, and that was that. A professional designer would probably have taken a few hours. I’d have taken a few weeks. Claude Design did it in minutes.

Two things surprised me, in opposite directions.

The first was how much of the taste it got right without my micromanaging. The pacing of the chat bubbles. The salmon-pink sphere. The shift from comfort into the reality check. I didn’t art-direct any of that.

The second was how fast it burns through the weekly token allowance. I’m on the $20 Pro plan, and this single project used up about 41% of my weekly limit. Anthropic has flagged this as a research preview, and the rate limits are likely to keep evolving, but for now Claude Design sits closer to a high-end exploration tool than an all-day design seat. If you plan to make this a regular habit, budget for a Max plan or a side project’s worth of extra credits.

What Claude Design is good at, and what it isn’t yet

The honest read after one afternoon with it: Claude Design shines when you have a clear idea, a reference, or a brand, and you want to compress weeks of “first version” work into a single conversation. Decks, landing pages, prototypes, marketing visuals, and animations are all in scope. It can also read a codebase and extract a brand’s design system: colours, typography, spacing, and the recurring component patterns. The handoff to Claude Code, where a design becomes real production code in your stack, is a strategic moat the rest of the field doesn’t yet have.

What it isn’t yet: a Figma replacement, a finished collaboration tool, or a daily workhorse on the entry-level plan. There’s no native Figma round-trip. The default aesthetic skews toward what reviewers (charitably) call “AI house style.” Inline comments occasionally vanish. Linking a giant monorepo can hang the browser. Anthropic is open about all of this. It’s labelled a research preview for a reason.

This is what early always looks like. The same was true of every meaningful tool I’ve used in this job over the last twenty years.

The actual point

The barrier between “I have an idea” and “I have a finished thing” has all but disappeared. That cuts both ways. It accelerates the slop. It also accelerates the work that pushes back against the slop. A one-person PSA. A teacher’s lesson plan. A small hotel’s guest comms. A charity’s awareness campaign.

What I keep coming back to is this. The same tools that built the trap can build the warning. The gap between the two is now you.

Two things, before you close the tab

One. Share the PSA with someone who needs to see it. A parent. A teen. A friend who’s started replacing humans with whichever bot has the warmest voice. Eighty-one seconds can do the work of an hour-long conversation.

Two. Find a thing that matters to you, and try Claude Design (or whichever AI tool you have to hand) on it this Saturday. Not the slop version. The version only you would have thought to make.

Three years ago I wrote the warning. This Saturday, an AI animated it. The next one is yours.

Discover more from Hotelemarketer by Jitendra Jain (JJ)


Your Chatbot Isn’t Your Friend: The AI Intimacy Trap, Three Years On (PSA Courtesy Claude Design)